Artificial intelligence is now part of daily life for billions of people, but public confidence in its use is waning. A global study by KPMG and the University of Melbourne shows that while two-thirds of people regularly use AI, more than half remain wary of trusting it.

AI Adoption Outpaces Public Trust

Artificial intelligence has moved from novelty to necessity. Since the launch of ChatGPT in late 2022, AI tools have been rapidly integrated into everyday life, work, and education worldwide. Today, two out of three people intentionally use AI for personal, professional, or academic purposes, a sign of how deeply the technology is now embedded in modern society.

However, trust in AI has not kept pace with adoption. A global study by the University of Melbourne and KPMG, surveying more than 48,000 people across 47 countries, reveals that 54% of respondents remain wary about trusting AI systems, particularly regarding safety, security, and ethical use.

The findings highlight a widening gap between people's reliance on AI and their confidence in its broader impact on society.

While most accept AI's presence, public concerns about misinformation, job losses, privacy risks, and inadequate regulation are shaping a more cautious global outlook.

AI Is Everywhere Now

Artificial intelligence is no longer a niche technology. According to the 2025 global study, 66% of people intentionally use AI regularly, with 38% using it on a daily or weekly basis. Among employees, 58% use AI at work, while 83% of students rely on AI tools in their studies.

The surge is most pronounced in emerging economies. Countries such as Nigeria, India, Egypt, China, Saudi Arabia, and the UAE report AI usage rates above 90%, compared with 58% in advanced economies.

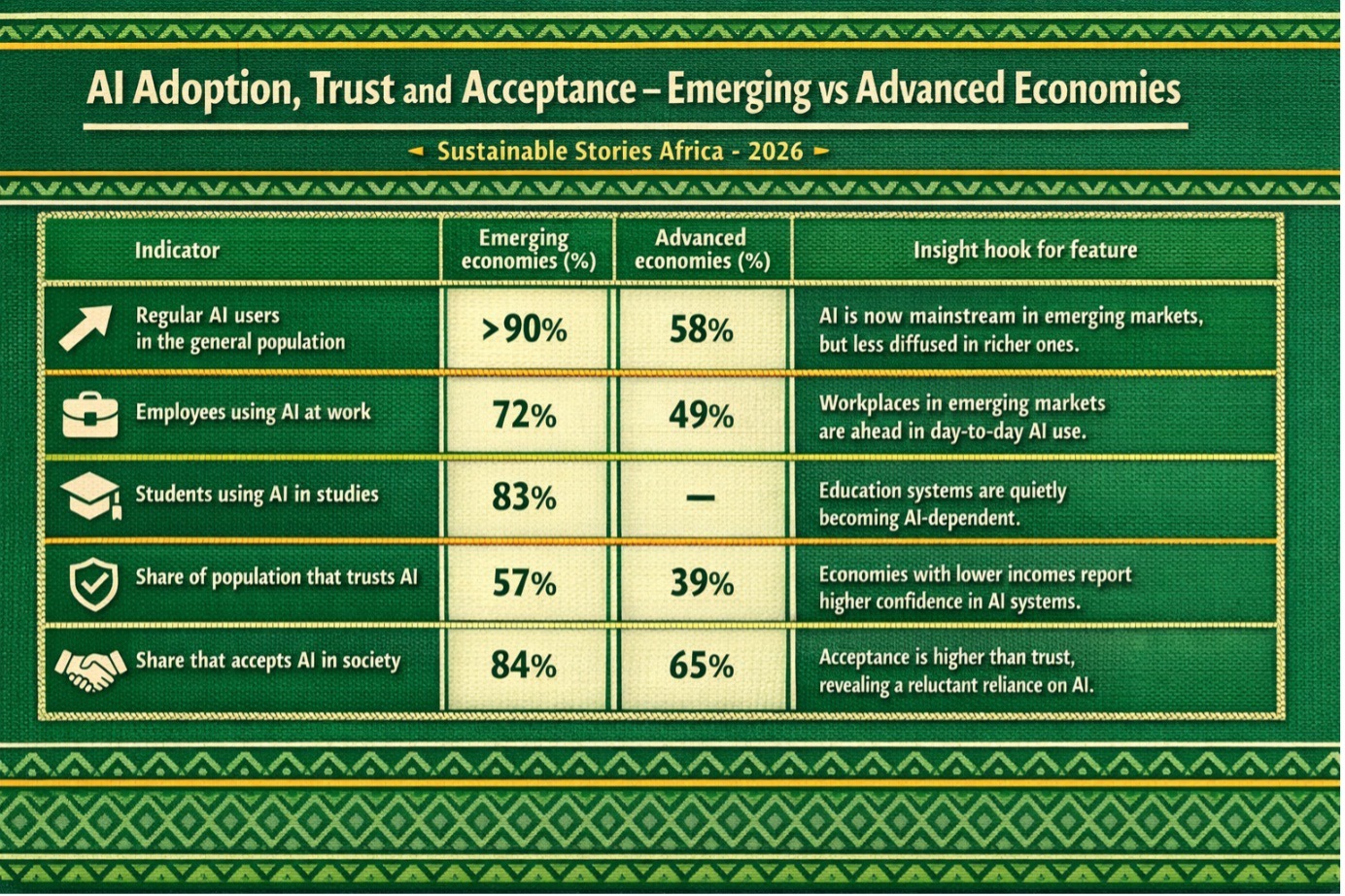

AI Adoption, Trust and Acceptance – Emerging vs Advanced Economies

| Indicator | Emerging economies (%) | Advanced economies (%) | Key insight |
|---|---|---|---|
| Regular AI users in the general population | >90 | 58 | AI is now mainstream in emerging markets, but less diffused in richer ones. |
| Employees using AI at work | 72 | 49 | Workplaces in emerging markets are ahead in day-to-day AI use. |
| Students using AI in studies | 83 | – | Education systems are quietly becoming AI-dependent. |
| Share of population that trusts AI | 57 | 39 | Economies with lower incomes report higher confidence in AI systems. |
| Share that accepts AI in society | 84 | 65 | Acceptance is higher than trust, revealing a reluctant reliance on AI. |

These countries also show higher AI literacy, training, and confidence in using AI tools effectively.

AI's accessibility has fueled this rapid uptake. Most users rely on free, publicly available generative tools such as ChatGPT, rather than employer-provided systems.

The use of AI technology cuts across finance, healthcare, education, manufacturing, agriculture, and media, delivering efficiency gains and expanding access to information.

But widespread use has not translated into deeper understanding. About 61% of people have received no formal AI training, and nearly 50% admit they have limited knowledge of how AI works or when it is being used.

Many people are unaware that common tools such as social media, facial recognition, and virtual assistants rely on AI.

Trust Is Under Pressure

Despite high adoption, 54% of respondents remain wary of trusting AI. Public scepticism focuses on safety, data security, ethical use, and social impacts, even as people trust AI's technical ability to generate useful outputs.

The trust gap is most obvious in advanced economies, where only 39% of people trust AI, compared with 57% in emerging economies. Acceptance follows a similar pattern: 65% in advanced economies versus 84% in emerging ones.

Emotions toward AI are mixed. Most people report feeling both optimistic and worried, reflecting enthusiasm for the benefits alongside anxiety about its risks.

Trust has also declined since 2022, even as adoption has increased, suggesting that greater exposure has made people more aware of AI's limitations, such as hallucinated outputs, bias, and misuse.

Confidence in who develops and governs AI also varies. Universities, researchers, and healthcare institutions are trusted more than governments or large technology companies. In advanced economies, confidence in big tech is especially low, highlighting concerns about corporate accountability.

Benefits Are Real, But Risks Are Too

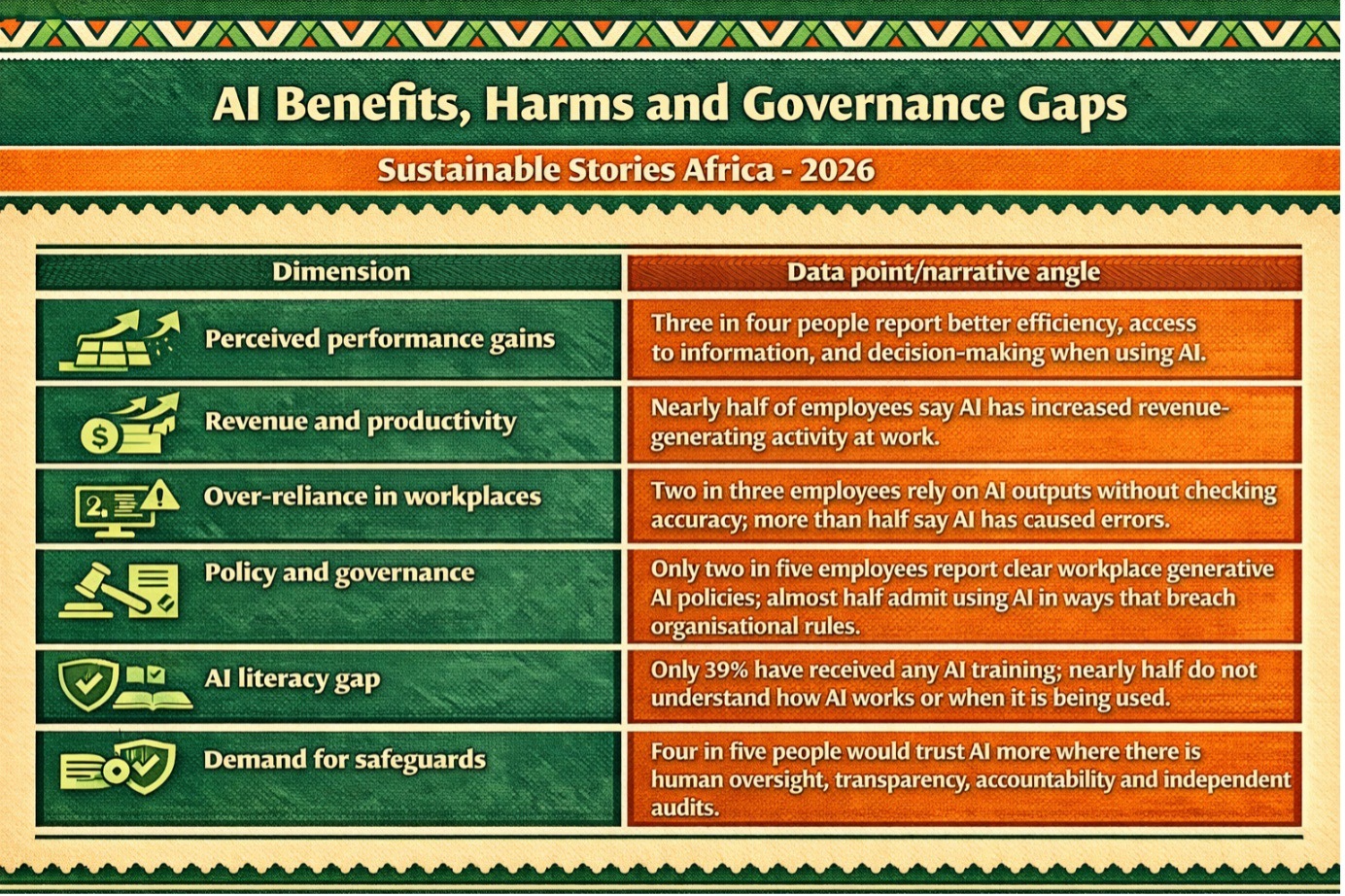

AI is delivering measurable benefits across society. Three in four people report improvements in efficiency, access to information, innovation, decision-making, and personalisation of services.

Employees say AI helps them work faster, make better decisions, and develop new skills. Nearly half report that AI has increased revenue-generating activity.

Students report similar gains. AI tools accelerate learning efficiency, reduce workload and stress, and support personalised education. In healthcare, AI is helping to improve diagnostics, including cancer detection, and enhance treatment planning.

However, these benefits come with serious trade-offs. Four in five people are concerned about negative outcomes including misinformation, cybersecurity risks, privacy breaches, job displacement, deskilling, and loss of human interaction. Two in five say they have personally experienced or observed such harms.

Public opinion is divided:

- 42% believe AI's benefits outweigh the risks

- 32% believe the risks outweigh the benefits

- 26% say they are balanced

Concerns about AI-generated misinformation are especially strong. About 87% of respondents want laws to combat false content and stronger fact-checking by social media and news platforms, citing risks to public trust and election integrity.

AI at Work Brings Mixed Impacts

In the workplace, the adoption of AI is accelerating. Three in five employees now use AI regularly, with emerging economies leading at 72%, compared with 49% in advanced economies. Most employees rely on general-purpose generative tools rather than employer-approved systems.

The performance benefits are clear. Workers report higher efficiency, better access to information, improved decision quality, and increased innovation. Yet AI is also reshaping how people collaborate.

Half of employees say they use AI instead of consulting colleagues or supervisors. One in five reports reduced communication and teamwork, raising concerns about a decline in human connection in AI-augmented workplaces.

Governance has not kept pace with the rate of adoption. Almost half of employees admit to using AI in ways that breach organisational policies, including uploading sensitive company data to public tools. Two in three rely on AI outputs without evaluating accuracy, and more than half say AI has caused work errors.

Only two in five employees report having clear workplace policies related to the use of generative AI. Many are not open about their use of AI, presenting AI-generated content as their own. This lack of transparency highlights growing risks around accountability, data protection, and quality control.

Students Depend on AI Heavily

Among students, the use of AI is even more widespread. About 83% of students say they use AI regularly, with half relying on it on a weekly or daily basis. The benefits mirror those seen at work: faster research, improved writing, reduced stress, and personalised learning.

However, problematic use is common. Two-thirds of students admit to using AI inappropriately, often hiding their use and relying on AI outputs without critical evaluation. Three-quarters say they could not complete their work without AI, and four in five put in less effort knowing AI is available.

Only half of education providers offer clear policies, training, or resources on the responsible use of AI. This raises long-term concerns about critical thinking, collaboration, academic integrity, and skills development for the future workforce.

Regulation Lags Behind Expectations

Public demand for AI regulation is strong: 70% of respondents believe AI should be regulated, yet only 43% think current laws are adequate. Most people are unaware of existing AI-related legislation in their countries.

Respondents favour a multi-layered regulatory approach:

- 76% support international laws

- 69% want national government regulation

- 71% favour co-regulation between government and industry

There is also overwhelming support for stricter rules on AI-generated misinformation, with expectations that both governments and media platforms take responsibility for content integrity.

Trust improves when assurance mechanisms are in place. Four in five people say they would be more willing to trust AI systems if there were clear safeguards, such as human oversight, transparency, accountability, responsible AI policies, and independent audits.

Literacy Is the Missing Link

AI literacy is lagging behind AI adoption. Only 39% of people have received any AI-related training, and nearly half say they do not understand how AI works or when it is being used.

However, literacy matters. Higher AI knowledge is linked to greater trust, acceptance, and more effective use. People with AI training report fewer negative experiences and more performance benefits.

Emerging economies outperform advanced ones in AI literacy, training, and confidence. Nigeria, India, Egypt, China, Saudi Arabia, and the UAE rank highest across all three indicators. This positions them to accelerate innovation and gain competitive advantages in the global AI economy.

AI Benefits, Harms and Governance Gaps

| Dimension | Data point |
|---|---|
| Perceived performance gains | Three in four people report better efficiency, access to information, and decision-making when using AI. |
| Revenue and productivity | Nearly half of employees say AI has increased revenue-generating activity at work. |
| Over-reliance in workplaces | Two in three employees rely on AI outputs without checking accuracy; more than half say AI has caused errors. |
| Policy and governance | Only two in five employees report clear workplace generative AI policies; almost half admit using AI in ways that breach organisational rules. |
| AI literacy gap | Only 39% have received any AI training; nearly half do not understand how AI works or when it is being used. |
| Demand for safeguards | Four in five people would trust AI more where there is human oversight, transparency, accountability and independent audits. |

Path Forward: Rebuilding Trust in AI

The global AI revolution is already reshaping work, education, and daily life. But trust is falling as quickly as adoption is rising. To close this gap, governments, businesses, and educators must invest in stronger regulation, clearer governance, and widespread AI literacy.

With better safeguards, transparent policies, and human-centred design, AI can deliver lasting social and economic value without eroding public confidence or institutional integrity.